
If you’re a parent with small children, you’ve probably taught them to “tie” their shoes by closing the Velcro straps. Someday, when they get older, maybe they’ll also learn how to tie shoelaces. You know, like their ancestors once did.

The question is, will they? Is learning to tie shoelaces a useful skill or just a remnant of an old and outdated technology that’s no longer relevant?

What about telling time on a clock with hands? Digital clocks are the norm, so much so that analog clock faces (a retronym) are generally just decorations, an optional look you can download to your Apple Watch for special occasions. Is reading a clock face a useful skill or a pointless carryover?

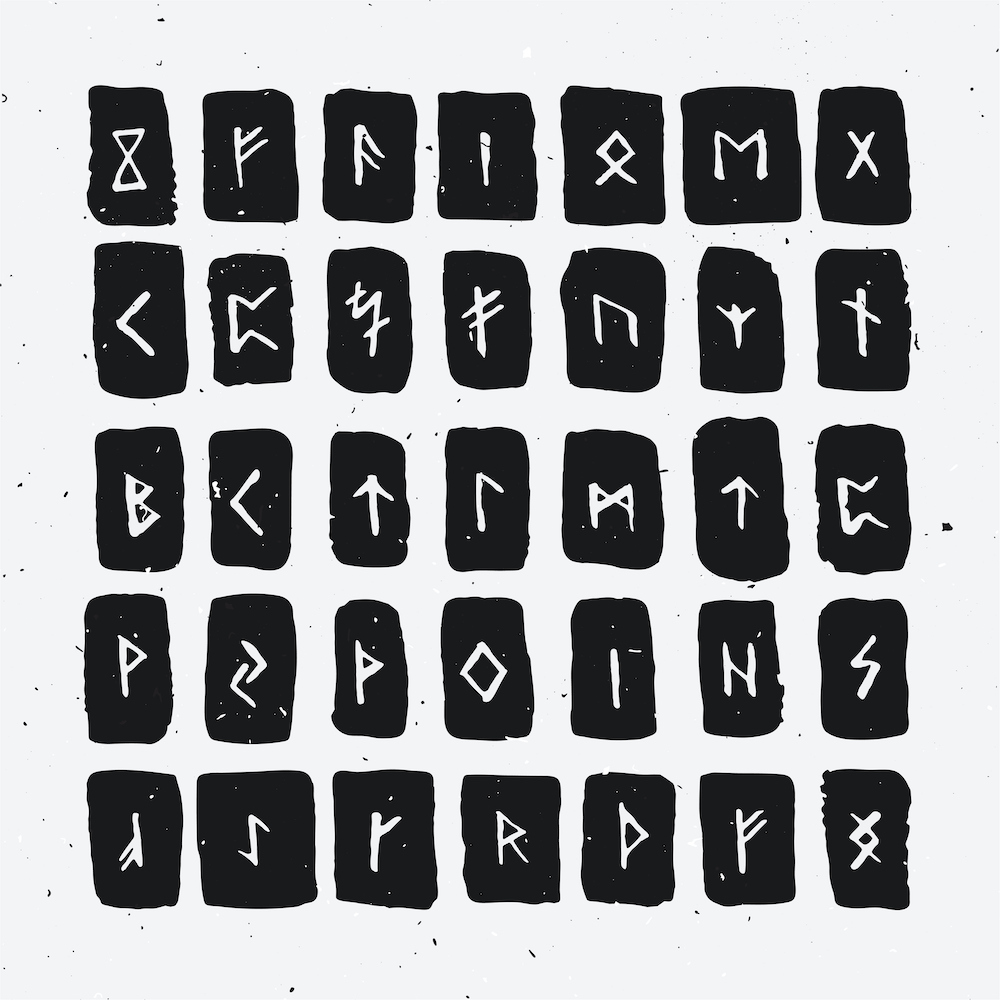

A friend mentioned that his son had started taking C++ programming classes in college. The son’s reaction was basically, “This isn’t programming! Python is programming. These are just primitive runes on ancient scrolls!”

He’s got a point. Programming languages have progressed an awful lot over the years. That’s a good thing. But with each new generation of languages, we leave an old generation behind. Is that a bad thing? Are we losing something – losing touch with what a computer really is and how it works?

Another friend made the opposite point. He had learned high-level languages first and hardware design later. He was utterly mystified that CPU chips couldn’t execute Python, HTML, Fortran, or any other language natively. How did this code get into that machine? He could see no connection between the two. Some large and magic step was evidently missing. It wasn’t until he studied assembly language programming that the aha! moment came, and he went on to become a brilliant hardware/software engineer after that.

For him, learning that assembly language even existed, never mind using it effectively, was the bridge between understanding hardware and understanding software. Ultimately, every program in every language boils down to machine instructions, and assembly language is simply the human-readable form of those instructions. (Which boil down to 1s and 0s, which are really voltages on wires, which are really electrons pushed through transistors, ad infinitum.)
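To make that bridge a little more concrete, here is a minimal sketch in C of a hypothetical accumulator machine. The opcodes (`OP_LOAD`, `OP_ADD`, `OP_MUL`, `OP_STORE`, `OP_HALT`) and the function `run_program` are invented for illustration and do not belong to any real instruction set. The point is only that a single high-level statement such as `x = a + b * c;` turns into a short sequence of tiny steps, each of which touches one register or one memory location.

```c
#include <assert.h>
#include <stddef.h>

/* A hypothetical accumulator machine, invented for illustration only.
   Real ISAs (RISC-V, Arm, x86) have many more instructions and registers. */
enum opcode { OP_LOAD, OP_ADD, OP_MUL, OP_STORE, OP_HALT };

struct insn {
    enum opcode op;
    size_t addr;   /* memory operand; ignored by OP_HALT */
};

/* Execute instructions until OP_HALT; returns how many were executed. */
size_t run_program(const struct insn *prog, size_t prog_len,
                   int *mem, size_t mem_len)
{
    int acc = 0;        /* the machine's single accumulator register */
    size_t pc = 0;      /* program counter */
    size_t executed = 0;

    for (;;) {
        assert(pc < prog_len);          /* never run off the end of the program */
        const struct insn *in = &prog[pc++];
        executed++;
        if (in->op == OP_HALT)
            return executed;
        assert(in->addr < mem_len);     /* operand must be a valid address */
        switch (in->op) {
        case OP_LOAD:  acc = mem[in->addr];  break;
        case OP_ADD:   acc += mem[in->addr]; break;
        case OP_MUL:   acc *= mem[in->addr]; break;
        case OP_STORE: mem[in->addr] = acc;  break;
        case OP_HALT:  break;           /* already handled above */
        }
    }
}

/* Hypothetical memory layout for the example: variables a, b, c, x. */
enum { A, B, C, X, MEM_SIZE };

/* The one-line statement  x = a + b * c;  "compiled" by hand. */
static const struct insn x_eq_a_plus_b_times_c[] = {
    { OP_LOAD,  B },   /* acc = b         */
    { OP_MUL,   C },   /* acc = b * c     */
    { OP_ADD,   A },   /* acc = a + b * c */
    { OP_STORE, X },   /* x = acc         */
    { OP_HALT,  0 },
};
```

With `a = 2`, `b = 3`, `c = 4` in memory, running the five-instruction program leaves 14 in `x`. A real compiler does the same kind of translation, just for a far richer instruction set and with register allocation and scheduling thrown in.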

Do we miss something – are we skipping an important step – by not learning assembly-language programming? Or is that just a pointless waste of time and a relic of an earlier and less efficient era? Does assembly belong in the same category as Greek, Latin, and proto-Indo-European languages, or is it foundational, like learning addition and subtraction before tackling simultaneous equations?

There’s no question that programming in assembly language enables you to build faster and smaller programs than a compiler can. That doesn’t mean you definitely will produce tighter code; only that you can. Compilers are generally less efficient with hardware resources, but more efficient with your time. Good C compilers can produce code that looks almost like hand-written assembly, but they can also produce completely inscrutable function blocks. Compiler companies generally devote more time to their IDEs and other customer-facing features than they do to their code generators. Binary efficiency is not high on the list of must-have features.

As programmer extraordinaire Jack Ganssle points out, “In real-time systems interrupts reign, but their very real costs are disguised by simple C structures that hide the stacking and unstacking of the system’s context.”

Because compilers hide so much of the underlying hardware structure – and deliberately so – programmers can’t see the results of their actions. One line of C++ might translate into a dozen lines of assembly, or a few hundred. There’s no way to know without reading the compiler’s assembly output, and most programmers won’t care. Sadly, many won’t even understand the difference. As you climb to higher levels of abstraction, the disconnect grows wider. Who can guess what one line of Ada will translate into at the machine level?
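Here is a small illustration in C of how much one innocent-looking line can hide. The structure `frame` and the functions `copy_one_line` and `copy_by_hand` are hypothetical, written just for this example. The single assignment `*dst = *src;` moves 4 KB of data on a typical target, exactly as much work as the explicit 1,024-iteration loop beside it. What the compiler actually emits for that line varies with compiler, target, and optimization level (often a call to `memcpy`, sometimes an inline block-copy sequence), and the only way to know is to look, for example with `gcc -S` or an online tool such as Compiler Explorer.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical structure: 1024 32-bit words, 4 KB on typical targets. */
struct frame {
    uint32_t words[1024];
};

/* One line of C... */
void copy_one_line(struct frame *dst, const struct frame *src)
{
    *dst = *src;   /* reads like a single assignment */
}

/* ...does the same work as this explicit loop: 1024 loads and 1024 stores
   at the source level. The machine code behind either version depends on
   the compiler, the target, and the optimization flags. */
void copy_by_hand(struct frame *dst, const struct frame *src)
{
    assert(dst != NULL && src != NULL);
    for (size_t i = 0; i < sizeof dst->words / sizeof dst->words[0]; i++)
        dst->words[i] = src->words[i];
}
```

Neither version is wrong. The difference is that the loop makes the cost visible in the source, while the assignment leaves it to whoever reads the disassembly.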

Fortunately, we hide this inefficiency with better hardware. Processors get faster, so we ask them to do more, that we may do less. Like an ermine-robed nobleman assigning work to his vassals, we command, “Don’t bother me with details. Just get it done!” The royal “we” becomes increasingly disconnected from the real work going on, in the hope that our minds may focus on more pressing and important issues.

In a historical fiction novel, the peons would rise up and overthrow their oppressive chief. I don’t think the CPUs are going to revolt anytime soon. Nor do I think we should abandon all high-level programming languages and revert to Mennonite tools or artisanal assembly methods. It’s just sad, and possibly counterproductive, that we’re widening such a gap between what a computer does and what we ask it to do. That’s a recipe for frustration, inefficiency, code bloat, overheating, and very challenging debugging sessions.

Instead, I think all programmers, regardless of product focus or chosen language(s), should be trained in the assembly language of at least one processor. It doesn’t even have to be the processor they’re using – any one will do. A good understanding of RISC-V assembly language (to pick one example) will make the point that printf() is not an intrinsic function on any machine. Just to be safe, Ada programmers should have to learn two different machines. And three for Java.
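To see why printf() is a library routine rather than something the hardware does, consider just one sliver of its job: turning an unsigned integer into decimal text, as `%u` does. The sketch below is a hypothetical helper, `format_uint`, not how any particular C library implements printf. It uses nothing but division, remainder, and character arithmetic, and a real printf adds widths, signs, floating point, buffering, and finally an operating-system call to get the bytes onto a screen.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical helper: write the decimal digits of `value` into `buf`
   (NUL-terminated) and return the number of digits written. A tiny
   stand-in for the "%u" conversion inside printf, not a real libc API. */
size_t format_uint(unsigned value, char *buf, size_t buf_len)
{
    char digits[3 * sizeof value];   /* 3 decimal digits per 8-bit byte is plenty */
    size_t n = 0;

    /* Peel off digits least-significant first: one remainder, one divide. */
    do {
        digits[n++] = (char)('0' + value % 10u);
        value /= 10u;
    } while (value != 0);

    assert(n < buf_len);             /* room for the digits plus the '\0' */

    /* Reverse into the caller's buffer, most-significant digit first. */
    for (size_t i = 0; i < n; i++)
        buf[i] = digits[n - 1 - i];
    buf[n] = '\0';
    return n;
}
```

Even this much leans on the hardware in ways a high-level programmer might never notice. The base RISC-V integer set, RV32I, has no divide instruction at all (that arrives with the optional M extension), so on such a core a compiler typically turns `value % 10u` and `value /= 10u` into calls to runtime helper routines.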
